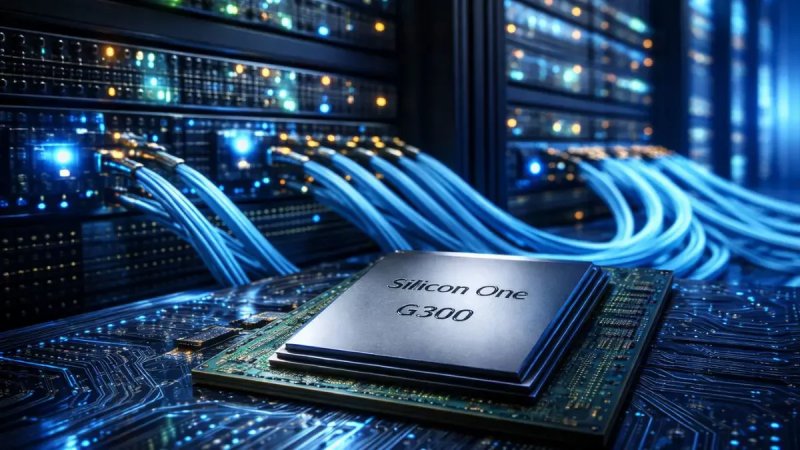

The announcement signals Cisco’s intent to compete directly with established AI infrastructure leaders such as Nvidia and Broadcom. Rather than building a general-purpose processor, Cisco is targeting one of the most critical bottlenecks in AI systems: the network fabric that connects thousands of accelerators and servers.

The chip is optimized for high bandwidth, low latency, and intelligent traffic management. In practical terms, it is designed to move massive volumes of data between AI accelerators without congestion or unpredictable delays. This capability is increasingly decisive as AI models scale from billions to trillions of parameters and require thousands of interconnected compute units working in parallel.

Why networking chips matter so much for AI

AI performance is no longer defined only by how fast a single processor can compute. Modern training and inference workloads depend on clusters of accelerators operating as a single system. In these environments, the network is effectively the nervous system. If data moves too slowly or arrives out of order, overall performance collapses, regardless of how powerful the individual chips may be.

Traditional data center networking was designed for web traffic, storage access, and enterprise workloads. AI workloads behave very differently. They involve continuous, synchronized exchanges of model parameters, gradients, and activation data. This creates intense east-west traffic inside the data center and places extreme pressure on switches and interconnects.
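To give a rough sense of scale, the sketch below estimates the traffic generated by one gradient synchronization. It assumes a ring all-reduce, a commonly used pattern for this exchange; the article does not say which collective any particular cluster uses. The model size, gradient precision, worker count, and link speed are all hypothetical values chosen for illustration.

```python
def ring_allreduce_bytes_per_worker(payload_bytes: float, workers: int) -> float:
    """Bytes each worker sends in one ring all-reduce of `payload_bytes`.

    In a ring all-reduce, every worker sends 2 * (N - 1) / N times the
    payload: one pass to reduce the data and one pass to share the result.
    """
    if workers < 1:
        raise ValueError("workers must be at least 1")
    if workers == 1:
        return 0.0  # nothing to exchange with a single worker
    return 2 * (workers - 1) / workers * payload_bytes


def transfer_seconds(bytes_sent: float, link_gbps: float) -> float:
    """Ideal transfer time on a link of `link_gbps` gigabits per second,
    ignoring latency, protocol overhead, and congestion."""
    return bytes_sent * 8 / (link_gbps * 1e9)


# Hypothetical example: 1 billion parameters, 2-byte gradients, 8 workers.
gradient_bytes = 1_000_000_000 * 2
per_worker = ring_allreduce_bytes_per_worker(gradient_bytes, workers=8)
print(f"{per_worker / 1e9:.2f} GB sent per worker per synchronization")
print(f"{transfer_seconds(per_worker, link_gbps=400):.3f} s on an ideal 400 Gb/s link")
```

Because this exchange repeats on every training step and runs on all workers at once, even a modest model keeps the links between servers busy almost continuously.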

Cisco’s move acknowledges a structural shift in computing. Networking is no longer a supporting component but a central determinant of AI efficiency, cost, and scalability.

What makes Cisco’s AI networking chip different

Cisco positions the chip as purpose-built for AI clusters rather than a repurposed Ethernet switch processor. It integrates advanced traffic scheduling, congestion control, and telemetry capabilities that are tuned for distributed machine learning workloads.

One key feature is deterministic performance. AI training jobs often fail or slow dramatically if network latency varies even slightly. Cisco claims the chip can maintain predictable data delivery even under peak loads by prioritizing synchronized traffic flows and dynamically rerouting packets around congestion points.
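The toy simulation below illustrates why latency variation matters for synchronized workloads. It is a simplified model, not a description of Cisco's chip: it assumes each training step completes only when the slowest worker's data arrives, and that each delivery takes a fixed base time plus a random, non-negative jitter. The function name and all numbers are hypothetical.

```python
import random


def mean_step_time(workers: int, base_ms: float, jitter_ms: float,
                   steps: int = 200, seed: int = 0) -> float:
    """Average duration of a synchronized step in a toy model.

    Each worker's delivery time is `base_ms` plus the absolute value of a
    Gaussian sample with standard deviation `jitter_ms`. The step takes as
    long as the slowest delivery.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    total = 0.0
    for _ in range(steps):
        # The step is gated by the slowest of all deliveries.
        total += max(base_ms + abs(rng.gauss(0.0, jitter_ms))
                     for _ in range(workers))
    return total / steps


for n in (8, 128, 1024):
    steady = mean_step_time(n, base_ms=1.0, jitter_ms=0.0)
    jittery = mean_step_time(n, base_ms=1.0, jitter_ms=0.1)
    print(f"{n:5d} workers: no jitter {steady:.3f} ms, with jitter {jittery:.3f} ms")
```

In this model the step time without jitter stays at the base latency regardless of cluster size, while the step time with jitter grows as workers are added, because a larger cluster is more likely to include at least one slow delivery.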

Another differentiator is deep programmability. The chip is designed to work tightly with software-defined networking tools, allowing operators to adapt network behavior to specific AI frameworks and workloads. This flexibility is intended to reduce the need for proprietary interconnects while still delivering near-custom performance.

How this challenges Nvidia’s dominance

Nvidia currently dominates AI infrastructure not only through its GPUs but also through its networking portfolio. Its acquisition of Mellanox gave it control over high-performance interconnects that tightly integrate with its accelerators. This vertical integration has become a major competitive advantage.

Cisco’s strategy is to challenge that advantage at the network layer. By offering an AI-optimized networking chip that works across heterogeneous environments, Cisco aims to appeal to customers who want to avoid lock-in to a single vendor ecosystem.

If successful, this approach could weaken the assumption that the best AI performance requires a fully Nvidia-controlled stack. Instead, it opens the possibility of mixing accelerators, networking, and software from different suppliers without sacrificing efficiency.

Where Broadcom fits into the picture

Broadcom is a major supplier of merchant silicon for data center switches. Many cloud providers rely on Broadcom chips paired with custom software to build their networks. Broadcom’s strength lies in scale, cost efficiency, and proven reliability.

Cisco’s announcement puts pressure on Broadcom by emphasizing specialization. While Broadcom offers powerful general-purpose switch chips, Cisco is betting that AI workloads demand more tailored solutions. The competition therefore becomes a debate between flexible merchant silicon and vertically integrated, AI-aware networking hardware.

This rivalry is likely to intensify as hyperscalers decide whether to continue customizing Broadcom-based designs or adopt more specialized chips that promise faster time to value for AI deployments.

How cloud providers and enterprises may respond

For hyperscale cloud providers, the decision will revolve around performance per dollar and operational control. Cisco’s chip could appeal to providers seeking predictable AI performance without developing extensive in-house networking software. The ability to integrate AI-aware features directly into the hardware may reduce engineering complexity and deployment time.

Enterprises adopting AI at smaller scales may also benefit. Many organizations lack the expertise to tune networks for distributed AI workloads. A turnkey networking solution designed specifically for AI could lower the barrier to entry and make advanced AI systems more accessible outside the largest cloud platforms.

However, adoption will depend on ecosystem support, including compatibility with popular AI frameworks, orchestration tools, and existing data center infrastructure.

What this means for data center architecture

Cisco’s move reinforces a broader trend toward disaggregated yet tightly coordinated data center components. Compute, storage, and networking are increasingly co-designed around AI workloads rather than treated as independent layers.

Future data centers may be planned around network topology first, with compute placed where data movement is most efficient. This represents a reversal of traditional design logic and elevates networking architects to a central role in AI strategy.

If Cisco’s chip delivers on its promises, it could accelerate this shift and influence how new AI-focused facilities are built from the ground up.

What are the risks and challenges for Cisco

Entering the AI silicon race carries significant risk. Nvidia’s ecosystem is deeply entrenched, and Broadcom’s chips are widely deployed and trusted. Cisco must prove that its solution delivers measurable real-world gains, not just theoretical advantages.

Another challenge is timing. AI infrastructure evolves rapidly, and customers expect fast iteration. Cisco will need to maintain a competitive roadmap and ensure its chip keeps pace with growing bandwidth and latency demands.

Finally, success depends on software. Hardware alone is not enough. Cisco must provide robust tools and integrations that allow customers to fully exploit the chip’s capabilities without excessive complexity.

Why this announcement matters beyond Cisco

This launch signals that AI infrastructure competition is expanding beyond accelerators into every layer of the stack. Networking, once considered mature and commoditized, is now a frontier for innovation.

As more companies enter this space, customers may gain greater choice and bargaining power. This could slow the consolidation of AI infrastructure around a single dominant vendor and encourage more open and interoperable systems.

In the long term, this competition may reduce costs, improve performance, and accelerate the adoption of AI across industries.

What to watch next

The next critical milestone will be early deployments and benchmarks. Customers will want evidence that Cisco’s chip can match or exceed the performance of existing AI networking solutions at scale.

Partnership announcements will also be telling. Integrations with major cloud providers, AI software platforms, and server manufacturers will indicate how broadly the chip is expected to be adopted.

Ultimately, the success of Cisco’s AI networking chip will be judged not by the announcement itself but by whether it reshapes how AI data centers are built and who controls the flow of data inside them.